Why Reinforcement Learning Feels Different (And Why That’s Good)

If you’ve worked with supervised learning, you’re used to a straightforward paradigm: show the model labeled examples, and it learns to predict labels for new data. Unsupervised learning asks the model to find patterns in unlabeled data. Reinforcement Learning (RL) flips the script entirely.

In RL, there are no labeled examples. Instead, you have an agent that takes actions in an environment and receives rewards. The agent’s goal is to determine which actions yield the highest cumulative reward over time. It’s learning through trial and error, discovering optimal behavior through experience.

This makes RL uniquely suited to sequential decision-making problems, including game AI, robotics, recommendation systems, resource allocation, and autonomous navigation. But the learning curve is steep. Abstract concepts like “value functions” and “policies” can feel overwhelming without hands-on experience.

That’s where multi-armed bandits and GridWorld come in. These classic environments are deliberately simple, stripping away complexity so you can build rock-solid intuition about RL fundamentals. Master these, and you’ll understand most of the core ideas behind modern deep RL.

The RL Vocabulary You Actually Need

Before diving into code, let’s nail down the core concepts. Don’t memorize these—just get a feel for what they mean:

State (s): The current situation the agent observes. In a bandit problem, there’s only one state. In GridWorld, it’s the agent’s position on the grid.

Action (a): A choice the agent can make, such as pulling a slot machine lever or moving up, down, left, or right.

Reward (r): Immediate feedback from the environment. Bandits give you a payout (or don’t). GridWorld gives you +1 for reaching the goal, -1 for hitting a trap, 0 otherwise.

Policy (π): The agent’s strategy—a mapping from states to actions. “When in state s, take action a.”

Value Function (V or Q): How good it is to be in a state (V) or take a specific action in a state (Q). These are the agent’s long-term expectations of cumulative reward.

Exploration vs. Exploitation: The fundamental tradeoff. Exploit what you know works. Explore to discover potentially better options. Nail this balance, and you’ve solved half of RL.
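To make the value-function entry a little more concrete, here are the usual textbook definitions. The symbol G_t for the return is an assumption introduced here, and the discount factor γ is the one that appears later in the Q-learning section.

```latex
\begin{align*}
G_t &= r_{t+1} + \gamma r_{t+2} + \gamma^2 r_{t+3} + \dots = \sum_{k=0}^{\infty} \gamma^k r_{t+k+1} \\
V^{\pi}(s) &= \mathbb{E}_{\pi}\left[ G_t \mid s_t = s \right] \\
Q^{\pi}(s, a) &= \mathbb{E}_{\pi}\left[ G_t \mid s_t = s,\ a_t = a \right]
\end{align*}
```

In a bandit there is only one state, so Q reduces to the expected reward of each arm.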

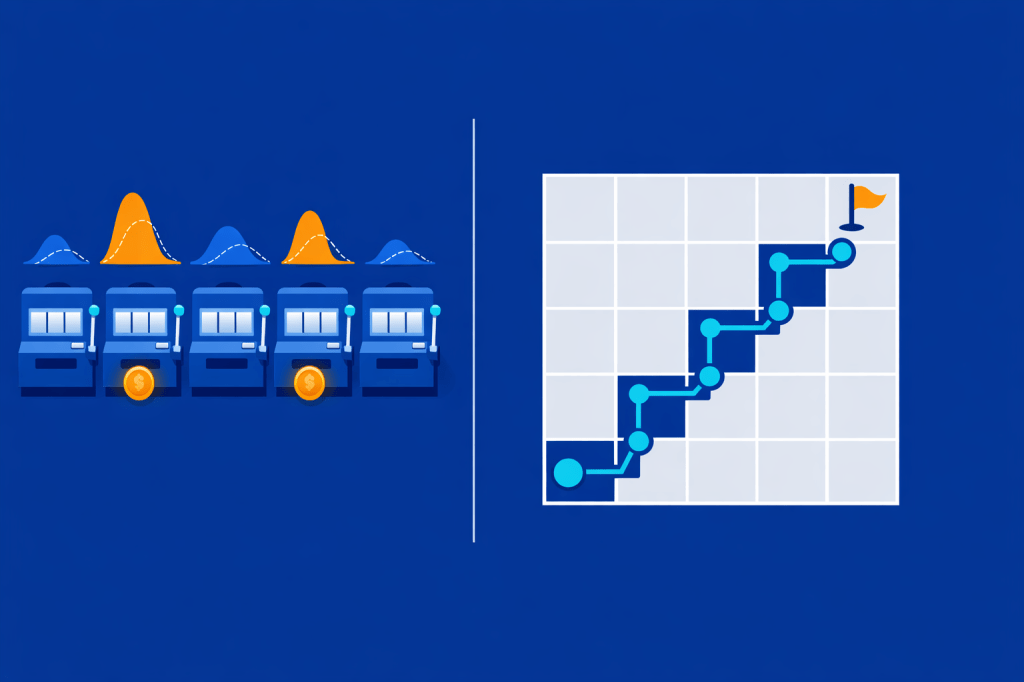

Why Start With Bandits? The Single-State Sandbox

The multi-armed bandit is RL at its simplest: one state, multiple actions, uncertain rewards. Imagine a row of slot machines (bandits), each with a different hidden payout distribution. You can pull one lever per round. Which do you pull?

If you knew which bandit paid out best, you’d always pull that one (pure exploitation). But you don’t know at the start. You have to try different bandits (exploration) to estimate their payouts, then focus on the best one (exploitation).

Bandits teach you:

- Exploration strategies: ε-greedy, Upper Confidence Bound (UCB), softmax selection

- Value estimation: How to track and update expected rewards

- Regret minimization: Keeping down the reward you lose by pulling suboptimal arms while you learn
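Regret is easier to grasp with numbers. The sketch below computes expected cumulative regret for a sequence of pulls; the function name `cumulative_regret` and the example means are hypothetical, and in a real problem the true means are hidden, so this is only possible in simulation.

```python
import numpy as np

def cumulative_regret(true_means, chosen_arms):
    """Expected cumulative regret after each pull.

    Regret per pull = (mean of the best arm) - (mean of the arm pulled).
    """
    true_means = np.asarray(true_means, dtype=float)
    chosen_arms = np.asarray(chosen_arms, dtype=int)
    per_step = true_means.max() - true_means[chosen_arms]
    return np.cumsum(per_step)

# Hypothetical example: three arms, best arm is index 2 (mean 0.9).
example_means = [0.2, 0.5, 0.9]
example_pulls = [0, 1, 2, 2, 2]
regret = cumulative_regret(example_means, example_pulls)
print(regret)
```

The curve rises while the agent tries the two weaker arms and goes flat once it settles on the best one.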

Here’s a simple bandit environment in Python:

```python
import numpy as np

class MultiArmedBandit:
    def __init__(self, n_arms=5):
        self.n_arms = n_arms
        # Each arm has a true mean reward (hidden from the agent)
        self.true_means = np.random.uniform(0, 1, n_arms)

    def pull(self, arm):
        """Pull an arm and get a noisy reward."""
        return np.random.normal(self.true_means[arm], 0.1)

    def optimal_arm(self):
        return np.argmax(self.true_means)
```

Now let’s implement ε-greedy exploration:

```python
class EpsilonGreedyAgent:
    def __init__(self, n_arms, epsilon=0.1):
        self.n_arms = n_arms
        self.epsilon = epsilon
        self.q_values = np.zeros(n_arms)       # Estimated value of each arm
        self.action_counts = np.zeros(n_arms)  # How many times we've pulled each arm

    def select_action(self):
        if np.random.random() < self.epsilon:
            return np.random.randint(self.n_arms)  # Explore: random action
        else:
            return np.argmax(self.q_values)        # Exploit: best known action

    def update(self, action, reward):
        """Update Q-value estimate after receiving a reward."""
        self.action_counts[action] += 1
        n = self.action_counts[action]
        # Incremental average: Q_new = Q_old + (1/n) * (reward - Q_old)
        self.q_values[action] += (reward - self.q_values[action]) / n
```

Run it for 1000 steps:

```python
bandit = MultiArmedBandit(n_arms=5)
agent = EpsilonGreedyAgent(n_arms=5, epsilon=0.1)

total_reward = 0
for step in range(1000):
    action = agent.select_action()
    reward = bandit.pull(action)
    agent.update(action, reward)
    total_reward += reward

print(f"Total reward: {total_reward:.2f}")
print(f"Agent's best arm: {np.argmax(agent.q_values)}")
print(f"True best arm: {bandit.optimal_arm()}")
```

Why this matters: You’ve just implemented the core RL loop: select an action, observe the reward, update your estimates. The ε-greedy strategy balances exploration (10% random) with exploitation (90% greedy). This same pattern appears everywhere in RL.
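The incremental update in the agent is just a running average that does not store past rewards. As a quick sanity check, this sketch applies the same rule to a batch of simulated rewards and compares the result with the ordinary mean; the helper name `incremental_mean` and the seed are assumptions for the demo.

```python
import numpy as np

def incremental_mean(rewards):
    """Apply Q += (r - Q) / n for each reward and return the final estimate."""
    q, n = 0.0, 0
    for r in rewards:
        n += 1
        q += (r - q) / n
    return q

rng = np.random.default_rng(0)          # seed chosen arbitrarily for the demo
sample_rewards = rng.normal(0.5, 0.1, size=1000)
estimate = incremental_mean(sample_rewards)
print(estimate, sample_rewards.mean())  # the two values agree up to rounding
```

Replacing 1/n with a constant step size turns this into an exponentially weighted average, which is the form the Q-learning update uses later.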

Beyond ε-Greedy: UCB and Softmax

ε-greedy is simple but inefficient—it explores randomly, wasting pulls on clearly bad arms. Two smarter strategies:

Upper Confidence Bound (UCB)

UCB says: “Pull the arm with the highest optimistic estimate.” It balances exploitation (high Q-value) with uncertainty (arms you haven’t tried much):

```python
class UCBAgent:
    def __init__(self, n_arms, c=2.0):
        self.n_arms = n_arms
        self.c = c  # Exploration constant
        self.q_values = np.zeros(n_arms)
        self.action_counts = np.zeros(n_arms)
        self.total_steps = 0

    def select_action(self):
        self.total_steps += 1
        # Try each arm at least once
        if self.total_steps <= self.n_arms:
            return self.total_steps - 1
        # UCB formula: Q(a) + c * sqrt(ln(t) / N(a))
        ucb_values = self.q_values + self.c * np.sqrt(
            np.log(self.total_steps) / (self.action_counts + 1e-5)
        )
        return np.argmax(ucb_values)

    def update(self, action, reward):
        self.action_counts[action] += 1
        n = self.action_counts[action]
        self.q_values[action] += (reward - self.q_values[action]) / n
```

UCB explores intelligently—it favors uncertain arms early, then converges to the best arm as uncertainty decreases.
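To see how the uncertainty term behaves, the sketch below evaluates only the bonus part of the formula, c * sqrt(ln(t) / N(a)), for some hypothetical pull counts. The function name `ucb_bonus` and the counts are illustrative assumptions.

```python
import numpy as np

def ucb_bonus(total_steps, action_counts, c=2.0):
    """Exploration bonus c * sqrt(ln(t) / N(a)) for each arm."""
    counts = np.asarray(action_counts, dtype=float)
    return c * np.sqrt(np.log(total_steps) / counts)

# Hypothetical counts after 100 pulls: arm 0 is well explored, arm 2 barely.
bonus = ucb_bonus(total_steps=100, action_counts=[90, 8, 2])
print(np.round(bonus, 2))
```

The rarely pulled arm gets the largest bonus, so it will be tried again unless its estimated value is far below the others.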

Softmax (Boltzmann) Exploration

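Softmax samples each action with probability proportional to exp(Q/T), so higher-valued arms are chosen more often while every arm keeps some chance of being picked: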

```python
class SoftmaxAgent:
    def __init__(self, n_arms, temperature=1.0):
        self.n_arms = n_arms
        self.temperature = temperature
        self.q_values = np.zeros(n_arms)
        self.action_counts = np.zeros(n_arms)

    def select_action(self):
        # Softmax probabilities: exp(Q/T) / sum(exp(Q/T))
        # Subtracting the max leaves the probabilities unchanged but avoids overflow.
        scaled = self.q_values / self.temperature
        exp_values = np.exp(scaled - np.max(scaled))
        probabilities = exp_values / np.sum(exp_values)
        return np.random.choice(self.n_arms, p=probabilities)

    def update(self, action, reward):
        self.action_counts[action] += 1
        n = self.action_counts[action]
        self.q_values[action] += (reward - self.q_values[action]) / n
```

High temperature: more exploration (uniform random). Low temperature: more exploitation (greedy). You can anneal the temperature over time to shift from exploration to exploitation.
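The effect of temperature is easy to check numerically. This sketch prints the selection probabilities for the same hypothetical Q-values at three temperatures; `softmax_probs` and the example values are assumptions made for illustration.

```python
import numpy as np

def softmax_probs(q_values, temperature):
    """Boltzmann probabilities exp(Q/T) / sum(exp(Q/T)), computed stably."""
    scaled = np.asarray(q_values, dtype=float) / temperature
    scaled -= scaled.max()              # does not change the result, avoids overflow
    exp_values = np.exp(scaled)
    return exp_values / exp_values.sum()

q_example = [0.2, 0.5, 0.8]             # hypothetical Q-value estimates
for t in (10.0, 1.0, 0.05):
    print(t, np.round(softmax_probs(q_example, t), 3))
```

At T = 10 the three probabilities are nearly equal, and at T = 0.05 almost all the mass sits on the highest-valued arm.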

GridWorld: Adding States and Sequences

Bandits teach exploration, but they’re stateless—every decision is independent. GridWorld introduces state transitions and episodic tasks: the agent navigates a grid, taking actions that change its state, accumulating rewards until reaching a terminal state (goal or trap).

Here’s a minimal GridWorld:

```python
class GridWorld:
    def __init__(self, size=5):
        self.size = size
        self.start = (0, 0)
        self.goal = (size - 1, size - 1)
        self.traps = [(1, 1), (2, 3)]
        self.state = self.start

    def reset(self):
        """Start a new episode."""
        self.state = self.start
        return self.state

    def step(self, action):
        """Take action (0=up, 1=down, 2=left, 3=right), return (next_state, reward, done)."""
        x, y = self.state
        if action == 0:
            y = max(0, y - 1)              # Up
        elif action == 1:
            y = min(self.size - 1, y + 1)  # Down
        elif action == 2:
            x = max(0, x - 1)              # Left
        elif action == 3:
            x = min(self.size - 1, x + 1)  # Right
        self.state = (x, y)

        if self.state == self.goal:
            return self.state, 1.0, True    # Reached goal: +1 reward, episode ends
        elif self.state in self.traps:
            return self.state, -1.0, True   # Hit trap: -1 reward, episode ends
        else:
            return self.state, 0.0, False   # Normal step: 0 reward, episode continues
```

Now the agent needs a policy, a strategy for choosing actions in each state. And it needs to learn value functions to estimate long-term rewards.

Q-Learning: The Classic Tabular Algorithm

Q-learning learns the Q-function: Q(s, a) = expected discounted cumulative reward from taking action a in state s, then following the optimal policy.

The Q-learning update rule:

Q(s, a) ← Q(s, a) + α * [r + γ * max_a' Q(s', a') - Q(s, a)]

- α (alpha): learning rate (how much to update)

- γ (gamma): discount factor (how much to value future rewards)

- r: immediate reward

- s': next state

- max_a' Q(s', a'): value of the best action in the next state
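A single update with concrete numbers may help before the full agent. This is a minimal sketch of the rule above as a standalone function; the name `q_learning_update` and the example values are hypothetical.

```python
def q_learning_update(q_sa, reward, max_q_next, alpha=0.1, gamma=0.99, done=False):
    """Return the updated Q(s, a) after one Q-learning step."""
    # On a terminal transition there is no next state to bootstrap from.
    target = reward if done else reward + gamma * max_q_next
    return q_sa + alpha * (target - q_sa)

# Hypothetical numbers: current estimate 0.0, no immediate reward,
# and the best action in the next state is currently valued at 1.0.
new_q = q_learning_update(q_sa=0.0, reward=0.0, max_q_next=1.0)
print(new_q)  # approximately 0.1 * (0 + 0.99 * 1.0 - 0) = 0.099
```

The estimate moves a fraction α of the way toward the target, which is how value leaks backward from the goal one step at a time.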

Here’s Q-learning for GridWorld:

```python
class QLearningAgent:
    def __init__(self, n_states, n_actions, alpha=0.1, gamma=0.99, epsilon=0.1):
        self.n_states = n_states
        self.n_actions = n_actions
        self.alpha = alpha
        self.gamma = gamma
        self.epsilon = epsilon
        self.q_table = np.zeros((n_states, n_actions))

    def state_to_index(self, state, grid_size=5):
        """Convert (x, y) to flat index."""
        return state[0] * grid_size + state[1]

    def select_action(self, state, grid_size=5):
        """ε-greedy action selection."""
        state_idx = self.state_to_index(state, grid_size)
        if np.random.random() < self.epsilon:
            return np.random.randint(self.n_actions)
        # Break ties at random: np.argmax alone always returns the first
        # maximum, so an all-zero Q-table would keep choosing "up".
        q_row = self.q_table[state_idx]
        best_actions = np.flatnonzero(q_row == q_row.max())
        return int(np.random.choice(best_actions))

    def update(self, state, action, reward, next_state, done, grid_size=5):
        """Q-learning update."""
        state_idx = self.state_to_index(state, grid_size)
        next_state_idx = self.state_to_index(next_state, grid_size)

        # Q-learning target: r + γ * max_a' Q(s', a')
        if done:
            target = reward
        else:
            target = reward + self.gamma * np.max(self.q_table[next_state_idx])

        # Update Q-value
        self.q_table[state_idx, action] += self.alpha * (target - self.q_table[state_idx, action])
```

Train it:

```python
env = GridWorld(size=5)
agent = QLearningAgent(n_states=25, n_actions=4)

for episode in range(1000):
    state = env.reset()
    done = False
    while not done:
        action = agent.select_action(state, grid_size=5)
        next_state, reward, done = env.step(action)
        agent.update(state, action, reward, next_state, done, grid_size=5)
        state = next_state

print("Learned Q-values:\n", agent.q_table)
```

After training, the Q-table approximates the optimal policy, provided the agent explored enough to reach the goal: for each state, take the action with the highest Q-value.
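Reading a policy out of a raw Q-table is tedious, so it can help to print it as arrows. The sketch below assumes the flat indexing used above (index = x * grid_size + y) and the same action numbering; `greedy_policy_grid`, `ARROWS`, and the demo table are hypothetical names and data.

```python
import numpy as np

ARROWS = {0: "^", 1: "v", 2: "<", 3: ">"}   # 0=up, 1=down, 2=left, 3=right

def greedy_policy_grid(q_table, grid_size=5):
    """Return one row of arrows per y coordinate, showing argmax_a Q(s, a).

    Assumes the flat index used above: index = x * grid_size + y.
    """
    rows = []
    for y in range(grid_size):
        row = [ARROWS[int(np.argmax(q_table[x * grid_size + y]))]
               for x in range(grid_size)]
        rows.append(" ".join(row))
    return rows

# Hypothetical Q-table in which "right" (action 3) is best everywhere.
demo_q = np.zeros((25, 4))
demo_q[:, 3] = 1.0
for line in greedy_policy_grid(demo_q):
    print(line)
```

Passing a trained `agent.q_table` instead of `demo_q` shows the learned policy. Arrows on the goal and trap cells carry no meaning, since those states are never updated.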

Real-World Connections: Why These “Toys” Matter

Bandits → Production Systems

Multi-armed bandits are everywhere:

- A/B testing: Each variant is an arm. UCB and Thompson Sampling power modern experimentation platforms.

- Recommendation systems: Which content to show next? Contextual bandits (bandits with state) model user preferences.

- Online advertising: Which ad to display? Real-time bidding uses bandit algorithms to maximize click-through rates.
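Thompson Sampling is named above but not shown, so here is a minimal sketch for the A/B testing case, assuming each variant produces a yes/no outcome such as a click. The function name, the conversion rates, and the uniform Beta(1, 1) prior are all assumptions chosen for illustration, not a description of any particular platform.

```python
import numpy as np

def thompson_sampling(true_rates, n_steps=2000, seed=0):
    """Beta-Bernoulli Thompson Sampling; returns pull counts per variant."""
    rng = np.random.default_rng(seed)
    n_arms = len(true_rates)
    successes = np.ones(n_arms)   # Beta(1, 1) prior = uniform over the rate
    failures = np.ones(n_arms)
    pulls = np.zeros(n_arms, dtype=int)
    for _ in range(n_steps):
        sampled = rng.beta(successes, failures)  # one draw from each arm's posterior
        arm = int(np.argmax(sampled))
        reward = float(rng.random() < true_rates[arm])  # simulated click / conversion
        successes[arm] += reward
        failures[arm] += 1.0 - reward
        pulls[arm] += 1
    return pulls

# Hypothetical conversion rates for three page variants.
pulls = thompson_sampling([0.05, 0.10, 0.30])
print(pulls)
```

Traffic shifts toward the strongest variant as evidence accumulates, instead of being split evenly for the whole test.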

Companies like Netflix, Spotify, and Google use bandit-based algorithms to personalize user experiences at scale. The exploration-exploitation tradeoff you learned in a few dozen lines of code powers billion-dollar businesses.

GridWorld → Navigation and Control

GridWorld concepts generalize to:

- Robotics: Replace grid cells with sensor readings, actions with motor commands. The Q-learning update keeps the same form, though continuous states and actions call for function approximation instead of a table.

- Autonomous vehicles: State = vehicle position + sensor data. Actions = steering, acceleration. Reward = safe, efficient driving.

- Game AI: AlphaGo and AlphaZero combine learned value and policy estimates with deep neural networks and tree search.

GridWorld strips away noise so you can debug your algorithm. Once it works on a 5×5 grid, scaling to high-dimensional continuous spaces is “just” an engineering problem (okay, a hard one—but the principles hold).

Common Pitfalls (And How to Avoid Them)

1. Misunderstanding Exploration vs. Exploitation

Symptom: Agent converges to suboptimal policy early.

Fix: Increase exploration (higher ε in ε-greedy, higher temperature in softmax). Use UCB for principled exploration. Anneal exploration over time—explore more early, exploit more late.
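One simple way to anneal exploration is a linear schedule. The sketch below is one possible choice among many; the function name and the start, end, and decay values are assumptions you would tune for your own problem.

```python
def annealed_epsilon(step, eps_start=1.0, eps_end=0.05, decay_steps=1000):
    """Linearly decay epsilon from eps_start to eps_end over decay_steps."""
    fraction = min(step / decay_steps, 1.0)
    return eps_start + fraction * (eps_end - eps_start)

for step in (0, 500, 1000, 5000):
    print(step, round(annealed_epsilon(step), 3))
```

Setting `agent.epsilon = annealed_epsilon(step)` before each action would plug this into the ε-greedy agents above.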

2. Incorrect Reward Shaping

Symptom: Agent learns weird behavior (e.g., spinning in place to farm rewards).

Fix: Rewards should align with your true goal. Sparse rewards (only at goal) are hard but honest. Dense rewards (every step) can introduce unintended incentives. Test your reward function.

3. Convergence Issues

Symptom: Q-values explode or never stabilize.

Fix: Lower learning rate α. Ensure γ < 1 in tasks that may never terminate (otherwise the return can grow without bound). Check for bugs in state indexing. Visualize Q-values per episode to spot divergence early.
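The role of γ < 1 follows from a geometric series. Assuming every reward is bounded in magnitude by some r_max (a symbol introduced here for the argument), the discounted return is bounded too:

```latex
\left| \sum_{k=0}^{\infty} \gamma^k r_{t+k+1} \right|
\le \sum_{k=0}^{\infty} \gamma^k r_{\max}
= \frac{r_{\max}}{1 - \gamma}, \qquad 0 \le \gamma < 1 .
```

With γ = 0.99 and r_max = 1, no Q-value should exceed 100 in magnitude, so values far beyond that point to a bug and not to slow convergence.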

4. Overfitting to Deterministic Environments

Symptom: Agent works in training environment, fails with slight changes.

Fix: Add stochasticity. Make actions succeed with probability < 1. Randomize start positions. Test on held-out environment variations.
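A small helper is one way to make actions unreliable without touching the environment class. This sketch replaces the chosen action with a uniformly random one some fraction of the time; `apply_slip` and `slip_prob` are hypothetical names, and other noise models are equally valid.

```python
import numpy as np

def apply_slip(action, n_actions=4, slip_prob=0.1, rng=None):
    """With probability slip_prob, replace the chosen action by a random one."""
    rng = rng if rng is not None else np.random.default_rng()
    if rng.random() < slip_prob:
        return int(rng.integers(n_actions))
    return action

rng = np.random.default_rng(0)
executed = [apply_slip(3, slip_prob=0.2, rng=rng) for _ in range(10_000)]
slip_rate = np.mean(np.array(executed) != 3)
print(f"fraction of steps where the executed action differed: {slip_rate:.3f}")
```

The observed rate is a little below `slip_prob`, because the random replacement sometimes lands on the intended action. In the training loop, you would pass `apply_slip(action)` to `env.step` while still updating the Q-table with the action the agent chose.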

5. Poor Experiment Design

Symptom: Can’t reproduce results. Can’t compare algorithms fairly.

Fix: Run multiple seeds (at least 5). Report mean ± std. Log all hyperparameters. Use the same environment for all agents. Plot confidence intervals.
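Here is a compact sketch of a multi-seed report, using a self-contained copy of the ε-greedy bandit loop so that each run is fully determined by its seed. The function name and the choice of five seeds are assumptions for illustration.

```python
import numpy as np

def run_epsilon_greedy(seed, n_arms=5, n_steps=1000, epsilon=0.1):
    """One epsilon-greedy run on a Gaussian bandit; returns the total reward."""
    rng = np.random.default_rng(seed)   # all randomness flows from this seed
    true_means = rng.uniform(0, 1, n_arms)
    q_values = np.zeros(n_arms)
    counts = np.zeros(n_arms)
    total = 0.0
    for _ in range(n_steps):
        if rng.random() < epsilon:
            arm = int(rng.integers(n_arms))
        else:
            arm = int(np.argmax(q_values))
        reward = rng.normal(true_means[arm], 0.1)
        counts[arm] += 1
        q_values[arm] += (reward - q_values[arm]) / counts[arm]
        total += reward
    return total

seeds = [0, 1, 2, 3, 4]
totals = np.array([run_epsilon_greedy(s) for s in seeds])
print(f"total reward: {totals.mean():.1f} ± {totals.std(ddof=1):.1f} over {len(seeds)} seeds")
```

Note that each seed here also draws a different bandit. To compare two agents fairly, you would give both the same list of seeds so they face the same set of problems.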

The Path Forward: From Tabular to Deep RL

Once you’ve mastered bandits and GridWorld, you’ve built the mental scaffolding for everything else in RL:

Policy Gradient Methods (REINFORCE, PPO): Instead of learning Q-values, directly optimize the policy. Same exploration-exploitation tradeoff, different update rule.

Actor-Critic Methods (A3C, SAC): Combine value-based (critic) and policy-based (actor) approaches. Still using the core concepts you learned here.

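Deep Q-Networks (DQN): Replace the Q-table with a neural network. The Q-learning target is the same; you’re approximating Q(s, a) with a function instead of a table, and fitting it by gradient descent with additions such as experience replay and a target network to keep training stable.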

Model-Based RL: Learn a model of the environment (predict next state and reward), then plan using that model. Your GridWorld understanding of state transitions maps directly.

Why mastering the basics matters: RL research moves fast, but the foundations are stable. Papers on arXiv in 2026 still reference bandits and GridWorld. Industry practitioners still use ε-greedy. The algorithms get more sophisticated, but the core ideas—exploration, value functions, policies—don’t change.

Where RL Is Heading in 2026 and Beyond

The RL landscape is evolving rapidly:

Foundation models meet RL: Large language models fine-tuned with RL from human feedback (RLHF) power assistants such as ChatGPT and Claude. The reward signal comes from human preferences, and the optimizer is often PPO, a policy gradient method built on the same principles you learned here.

Sim-to-real transfer: Training robots in simulation (GridWorld on steroids) then deploying to the real world. Research teams and companies are exploring RL approaches for locomotion and autonomous driving.

Offline RL: Learning from fixed datasets without exploration. Critical for healthcare, finance, and other domains where exploration is expensive or dangerous.

Multi-agent RL: Agents learning to cooperate or compete. Game theory meets reinforcement learning. Think Dota 2 bots, autonomous drone swarms, traffic control systems.

Hierarchical RL: Breaking complex tasks into subgoals. An agent learns a high-level policy (which subgoal to pursue) and low-level policies (how to achieve each subgoal). Your GridWorld navigation is one hierarchy level; planning a cross-country trip is many.

The common thread? They all build on the fundamentals: exploration, value estimation, policy optimization. If you understand bandits and GridWorld, you have the vocabulary to follow the core ideas in most RL papers, even when the details take longer to work through.
