For a while now, I’ve been grinding through reinforcement learning theory, value functions, policy gradients, Bellman equations, exploration strategies, the whole stack. And like a lot of people, I hit that wall where the math made sense on paper, but the intuition wasn’t sticking. So I decided to flip the script.

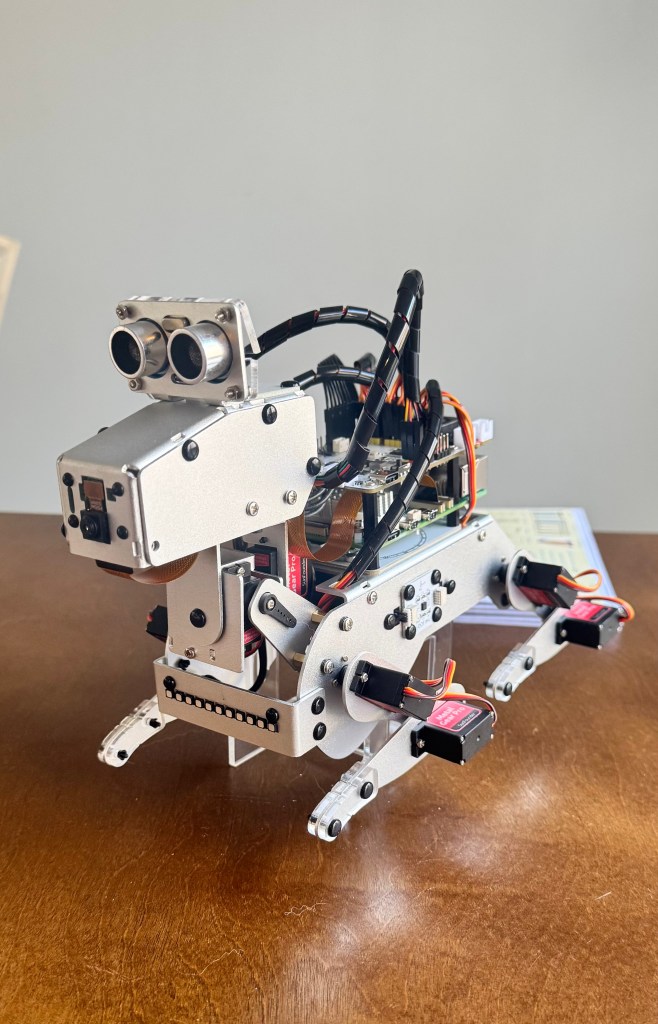

Instead of forcing myself deeper into abstract examples, I’m going to ground RL in a real world project: teaching my Sunfounder PiDog to improve its walking gait using reinforcement learning.

This project is my attempt to make RL tangible, something I can see, hear, and debug in the physical world.

Why Gait Tuning?

Quadruped robots are perfect RL playgrounds. They’re unstable, noisy, unpredictable, and full of nonlinear dynamics, exactly the kind of environment where RL shines.

The PiDog comes with a default walking gait, but it’s far from optimal:

- It wobbles

- It sometimes stumbles

- It wastes energy

- It doesn’t walk in a straight line

That’s not a bug, that’s an opportunity.

With RL, I can let the robot discover better gait parameters through trial-and-error. No hand tuned PID loops. No manually crafted trajectories. Just learning.

What the Project Actually Does

The idea is simple:

-

Start with a baseline gait. The PiDog walks using its default parameters.

-

Let an RL agent tweak the gait. Each episode, it adjusts things like:

- step length

- step height

- leg phase offsets

- body tilt compensation

-

Run the gait for a few seconds. The robot physically walks forward.

-

Measure performance

- How far did it move

- How stable was the IMU

- Did it stumble

-

Reward the agent. More forward motion + more stability = higher reward.

-

Repeat. Over dozens of episodes, the gait visibly improves.

This is RL in its purest form:

Try something → observe outcome → update policy → try again.

What It Looks Like in Practice

The coolest part is how visible the learning is.

Episodes 1–5: Chaos

- legs out of sync

- wobbling

- barely moving forward

Episodes 6–15: Patterns emerge

- some gaits look “almost right”

- wobble decreases

- forward motion becomes consistent

Episodes 20–40: Optimization

- smoother stride

- better balance

- fewer IMU spikes

Episodes 40+: Confidence

- stable, rhythmic gait

- noticeably faster

- looks intentional rather than mechanical

Watching the PiDog improve is like watching a puppy learn to walk with purpose.

Why This Helps Me Learn RL

This project makes me face the real challenges of RL: noisy sensors, delayed rewards, imperfect resets, risky exploration, important reward shaping, safety constraints, and sample efficiency. Textbooks often overlook these, but robots don’t. By tuning gait end-to-end, I memorably reinforce essential RL concepts.

What I’ll Be Building Next

In the next post, I’ll break down:

- the state vector I’m using

- the action space (continuous adjustments)

- the reward function

- the training loop

- How I’m logging IMU + distance data

- How I’m preventing the robot from hurting itself

- How I’m iterating on the policy

I’ll also share the full Python implementation once it’s cleaned up.

Why I’m Sharing This

If you’re learning RL and feeling stuck in the theory, I want to show you that you don’t need a massive GPU cluster to make RL real.

A $100 robot dog and a few lines of Python can teach you more about RL intuition than a dozen academic papers.

This project is my way of bridging the gap between:

- understanding RL, and

- feeling RL

And honestly? It’s just fun.

Leave a comment